Before There Was ChatGPT...

tl;dr

- Not an overnight breakthrough: Modern LLMs are built on nearly a decade of experimentation, starting with the introduction of the Transformer architecture.

- Conversation pre-existed ChatGPT: GPT-3, BlenderBot, and LaMDA all demonstrated dialogue capabilities before November 2022.

- ChatGPT's real innovation was alignment and interface: The underlying model wasn't radically new. The breakthrough was making it usable through instruction tuning and RLHF.

- The work continues: Reasoning, alignment, and context utilization are active research frontiers with years of development ahead.

- Business implication: If you're treating AI as a moment to react to, you're already behind. It's a trajectory to get ahead of.

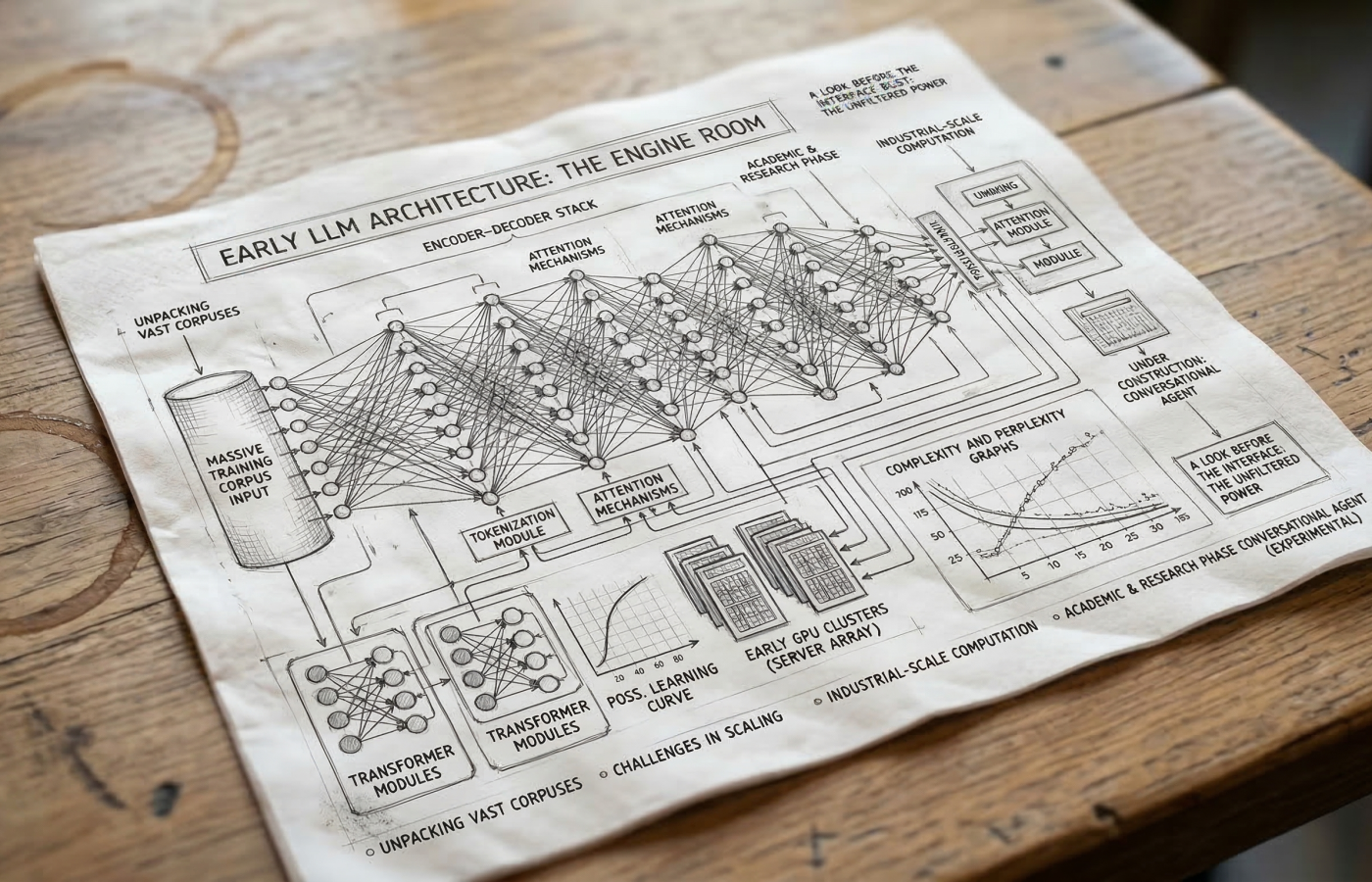

Once upon a time, in 2017, eight Google researchers wrote a paper called "Attention Is All You Need". This paper, which introduced the Transformer architecture, is the foundation for nearly every modern large language model (LLM).

What followed over the next five years was a steady run of compounding research and experimentation that most people never saw. Then came November 2022, AI's iPhone moment.

Note: Before 2017, neural language models could already generate text and handle tasks like translation. But most were recurrent networks (RNNs and LSTMs) that processed text one token at a time, which made them much harder to parallelize and scale, and much worse at maintaining long-range context.

Here's what that build-up actually looked like.

Early LLMs Were Mostly Research Tools (2018–2020)

The first modern LLMs appeared with the Transformer architecture and early GPT models.

- GPT-1 (2018) — ~117M parameters

- GPT-2 (2019) — 1.5B parameters

- GPT-3 (2020) — 175B parameters
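To put those jumps in perspective, here is a quick back-of-the-envelope calculation using only the parameter counts listed above. The function name `growth_factors` is just an illustrative helper, and the GPT-1 figure is approximate, so treat the results as rough ratios.

```python
# Parameter counts as listed above (the GPT-1 figure is approximate).
PARAMS = {
    "GPT-1 (2018)": 117e6,
    "GPT-2 (2019)": 1.5e9,
    "GPT-3 (2020)": 175e9,
}


def growth_factors(params):
    """Return (name, factor) pairs: each model's size relative to the one before it."""
    names = list(params)
    return [
        (names[i], params[names[i]] / params[names[i - 1]])
        for i in range(1, len(names))
    ]


for name, factor in growth_factors(PARAMS):
    print(f"{name}: about {factor:.0f}x the previous model")
```

Each release was one to two orders of magnitude larger than the last, which is the scaling story the rest of this post builds on.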

These models were trained on huge text datasets and could generate coherent paragraphs of text, summarize content, and translate languages. By 2020, GPT-3 could even write code. But they were typically used like this:

Prompt → single output.

They were more like a supercharged autocomplete field than an interactive assistant.

Some Models Could "Converse" Before ChatGPT

Conversational systems absolutely existed before ChatGPT.

GPT-3 Playground (2020)

Developers could simulate conversation by formatting prompts manually. But the model was not designed specifically for dialogue. It lost context easily, drifted off topic, hallucinated frequently, and required careful prompt engineering.
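Here is a minimal sketch of what that manual formatting could look like. The function name `build_dialogue_prompt`, the "Human:" / "AI:" labels, and the preamble text are illustrative assumptions, not an official API or a fixed convention. The point is that the "conversation" was really one block of text that had to be rebuilt and resent on every turn.

```python
def build_dialogue_prompt(
    history,
    user_message,
    preamble="The following is a conversation with an AI assistant.",
):
    """Flatten a chat transcript into one text prompt for a completion-style model.

    history: list of (speaker, text) tuples, oldest first.
    The speaker labels are an illustrative convention, not a fixed API.
    """
    lines = [preamble, ""]
    for speaker, text in history:
        lines.append(f"{speaker}: {text}")
    lines.append(f"Human: {user_message}")
    lines.append("AI:")  # trailing cue so the model continues as the assistant
    return "\n".join(lines)


history = [
    ("Human", "What is a Transformer?"),
    ("AI", "A neural network architecture built around attention."),
]
prompt = build_dialogue_prompt(history, "Who introduced it?")
print(prompt)
```

Nothing here gives the model memory. The developer carries the transcript, decides how much of it fits, and usually has to stop generation before the model starts writing the human's next line too.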

Facebook BlenderBot (2020–2022)

Facebook (now Meta) built a chatbot specifically for dialogue: casual conversation, question answering, and a simple persona. But conversations broke down quickly, factual accuracy was weak, and it sometimes produced harmful content.

Google LaMDA (2021)

LaMDA was built specifically for open-ended dialogue. It could hold conversations reasonably well, and it sparked a well-known controversy in 2022 when a Google engineer claimed it was sentient. But it was never broadly released to the public. It remained largely a research system.

ChatGPT's Real Innovation: The Interaction

ChatGPT was fine-tuned from a model in the GPT-3.5 series, which was an evolution of GPT-3 rather than a radically bigger model. The big innovation was training for interaction.

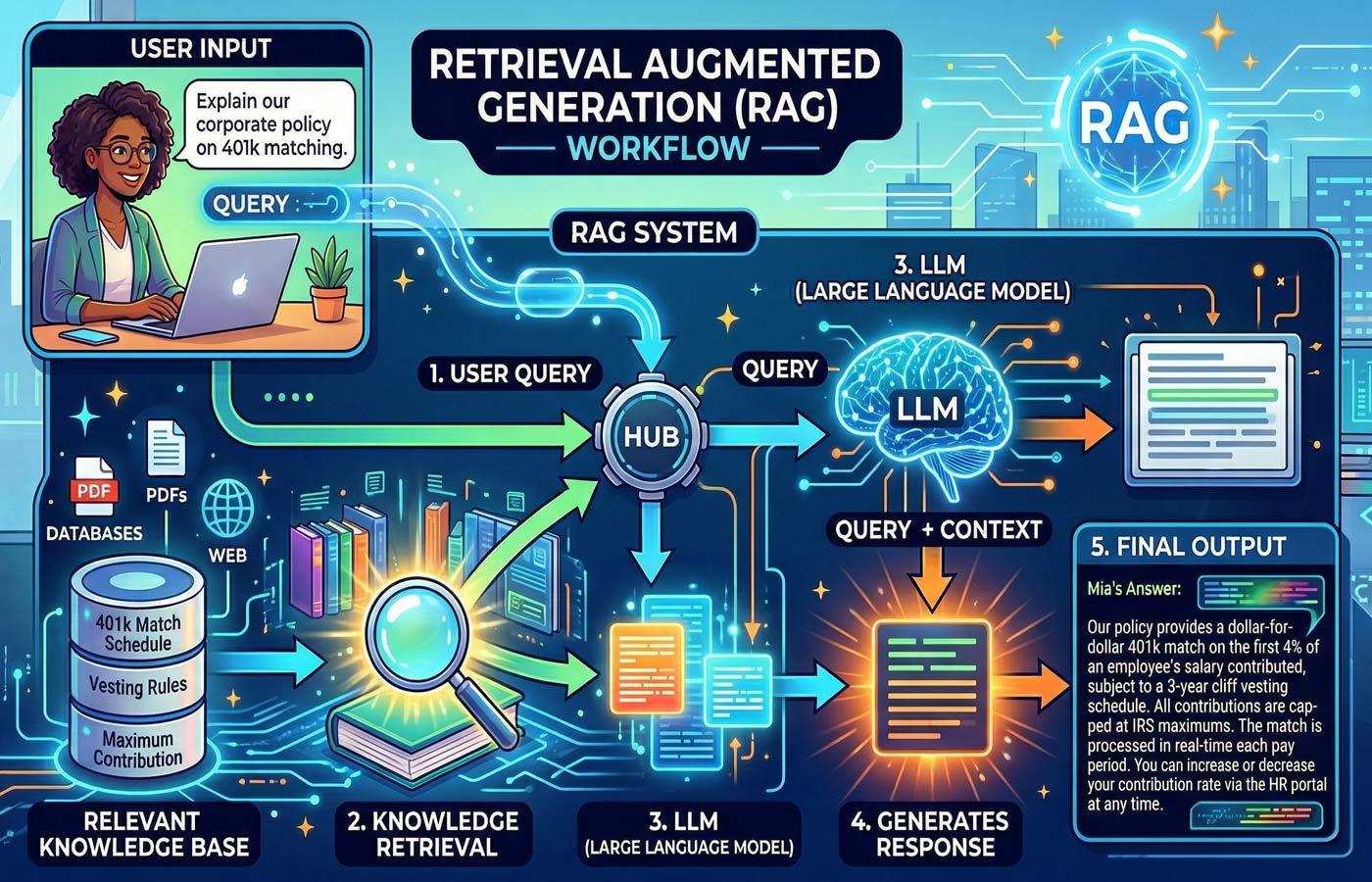

Step 1 — Pretraining. Learn language from massive internet datasets.

Step 2 — Instruction tuning. Humans write prompts and ideal responses. The model learns what helpful answers look like.

Step 3 — RLHF (Reinforcement Learning from Human Feedback). Humans rank competing responses. The model learns what humans prefer.

The result: more coherent answers, fewer hallucinations, and much better conversational flow.
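How does a ranking become a training signal? In the InstructGPT paper listed in the references, human rankings are used to train a separate reward model, and the language model is then optimized with reinforcement learning against that reward model's scores. The sketch below shows only the first half of that idea in toy form: it turns one ranking into preferred/rejected pairs and scores them with a pairwise loss of the form -log(sigmoid(r_preferred - r_rejected)). The function names and the reward numbers are made up for illustration, and a real reward model is a neural network, not a lookup table.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_responses):
    """Turn one human ranking (best first) into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))


def pairwise_preference_loss(reward_preferred, reward_rejected):
    """Compute -log(sigmoid(r_preferred - r_rejected)).

    The loss is small when the reward model scores the human-preferred
    response higher, and large when it disagrees with the human.
    """
    diff = reward_preferred - reward_rejected
    return math.log1p(math.exp(-diff))  # algebraically equal to -log(sigmoid(diff))


# One human ranking of three competing responses, best first (toy data).
ranked = ["answer_a", "answer_b", "answer_c"]
# Hypothetical reward-model scores for each response (made-up numbers).
toy_rewards = {"answer_a": 2.0, "answer_b": 0.5, "answer_c": -1.0}

pairs = ranking_to_pairs(ranked)
losses = [pairwise_preference_loss(toy_rewards[w], toy_rewards[l]) for w, l in pairs]
mean_loss = sum(losses) / len(losses)
print(f"{len(pairs)} pairs, mean loss {mean_loss:.3f}")
```

Training the reward model means nudging its scores to shrink this loss across many rankings. Once it reliably agrees with human raters, it can stand in for them and score far more responses than people could ever rank by hand.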

The model itself was not entirely new. The big difference was the product experience. ChatGPT introduced persistent conversation history, turn-by-turn dialogue, instruction-following, and an accessible web UI that required nothing more than a browser. This made LLMs feel like an assistant instead of a text generator.

LLMs existed for years. ChatGPT made them usable.

ChatGPT launched in November 2022 and was estimated to have reached 100 million users within about two months, making it the fastest-growing consumer application in history at that point.

Why the Moment Felt Sudden When It Wasn't

The breakthrough was gradual, but three things came together in 2022:

- Models became more capable with scale

- Alignment training improved usability

- Chat interfaces made them accessible

That combination created the "overnight" effect. Five years of compounding research became visible to the world all at once.

The Work Is Still Happening

Here's the part many people miss: 2022 was a milestone, not an arrival. The same compounding that built toward ChatGPT is continuing right now. Compare what ChatGPT could do in 2022 with what it can do now, then imagine what another three and a half years will bring.

Reasoning capabilities are an active frontier: OpenAI's own research shows that even they are still working to understand what these models are doing when they "think." Context utilization remains an open problem. Alignment, making models reliably helpful and safe across real-world conditions, is nowhere near solved.

The people who will be best positioned aren't the ones reacting to what happened in 2022. They're the ones building real understanding of where this trajectory is headed, and making deliberate decisions ahead of the next inflection point.

Final Thoughts

"Attention Is All You Need" in 2017 was the foundation. GPT-2 and GPT-3 proved the scaling potential. LaMDA and BlenderBot showed the industry racing toward dialogue. Instruction tuning and RLHF solved the usability problem. The interface made it real for the world.

That's five years of work that exploded in a moment. And that same compounding is happening right now, in reasoning, alignment, and capabilities that haven't yet been realized. Don't be behind... attention is all you need.

References

- Attention Is All You Need — Vaswani et al., Google Brain, 2017

- Language Models are Unsupervised Multitask Learners — Radford et al., OpenAI, 2019

- Language Models are Few-Shot Learners — Brown et al., OpenAI, 2020

- BlenderBot 3: An AI Chatbot That Improves Through Conversation — Meta, August 2022

- LaMDA: Language Models for Dialog Applications — Thoppilan et al., Google Research, 2022

- Aligning language models to follow instructions — OpenAI, 2022

- Training language models to follow instructions with human feedback — Ouyang et al., OpenAI, 2022

- Introducing ChatGPT — OpenAI, November 30, 2022

- Reasoning models struggle to control their chains of thought, and that's good — OpenAI, March 5, 2026