From Vibe Coding to Agentic Engineering

tl;dr

- Vibe coding's evolution: Karpathy's 2025 "vibe coding" has given way to disciplined agent orchestration as the professional standard

- Agent swarms replace solo coding: Claude Code agent teams and OpenAI Codex enable parallel, specialized AI workers coordinating on complex tasks

- Orchestration is the new bottleneck: Systems like Gas Town act as "Kubernetes for AI coding agents," managing 20–30 parallel instances

- MCP standardizes the glue layer: Anthropic's Model Context Protocol has become the "USB-C of AI integrations" for connecting agents to external tools and data

- Security risks have evolved: Hallucinations now cascade into operational failures across multi-agent architectures, demanding new safeguards

A year ago, if you asked a developer how they used AI, the answer was some version of "I paste a prompt, get code back, and clean it up." That workflow was sometimes called "vibe coding," and it described a surprisingly effective way to build prototypes. Fast forward to February 2026, and the conversation has changed. Developers aren't prompting a single AI assistant anymore. They're orchestrating teams of AI agents that research, build, test, and debug in parallel, while the human acts as architect and reviewer.[1]

This shift from reactive prompt-response to proactive, goal-directed agent architectures represents one of the most significant changes in how software gets built. The industry now calls it agentic engineering, and it's underpinned by sophisticated orchestration frameworks that treat AI agents as a coordinated workforce rather than isolated assistants.[2]

For business leaders evaluating technology investments, understanding this shift matters. This article maps the current state of agentic engineering: where it came from, the technical architectures driving it, the tools defining the space, and the risks that demand attention before you deploy any of it.

The Evolution of Vibe Coding

The current landscape makes a lot more sense when you understand its origin. In early 2025, Andrej Karpathy coined the term "vibe coding" to describe a workflow where developers relied on natural language intent and the inherent capabilities of large language models to build applications in real time. Karpathy described it as a process where practitioners "fully give in to the vibes, embrace exponentials, and forget that the code even exists."[3] It was fun, it was fast, and for throwaway projects and demos, it worked, sometimes.

By February 2026, Karpathy himself declared the term passé for professional use. He replaced it with agentic engineering, defining "agentic" as the new default where "you are not writing the code directly 99% of the time, you are orchestrating agents who do and acting as oversight." The "engineering" part emphasizes "the art & science and expertise" the role demands.[3]

That distinction matters more than it might seem. The shift from vibe coding to agentic engineering isn't just a rebranding; it reflects a fundamental change in what developers actually do all day. Traditional programming meant writing precise syntax in an IDE. Vibe coding meant describing what you wanted in natural language prompts and accepting what came back. Agentic engineering means setting strategic goals and directing swarms of AI agents that handle the implementation, while the developer focuses on systems architecture, oversight, and quality.[3]

The development speed has shifted accordingly. Traditional programming was methodical and deliberate. Vibe coding enabled rapid prototyping. Agentic engineering enables what practitioners are calling high-throughput industrialization: the ability to run multiple development workstreams simultaneously with AI handling the execution.

This paradigm shift also acknowledges a critical reality: as agents gain autonomy, including the ability to modify files and operate interfaces, hallucinations evolve from incorrect text into concrete operational failures.[1] A chatbot giving you a wrong answer is an inconvenience. An autonomous agent writing flawed code into your production system is a business risk. Understanding how the major platforms are addressing that risk starts with how they architect their agent teams.

Claude Code Agent Teams: Parallel Specialists

Anthropic's Claude Code has emerged as a primary vehicle for this new era, specifically through its "agent teams" functionality. This feature moves beyond sequential task execution, allowing a "team lead" instance of Claude to delegate to multiple "teammates" that work in parallel across frontend, backend, and testing domains.[5]

The core insight behind this architecture is simple but powerful: LLMs perform worse as context expands. Adding a project manager's strategic notes to a context window that's trying to fix a CSS bug actively hurts performance. By giving each agent a narrow scope and clean context, you get better reasoning within each domain, independent quality checks, and natural checkpoints between phases.
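
To make the "narrow scope, clean context" idea concrete, here is a minimal Python sketch. It is not Claude Code's actual API: `Task`, `build_context`, `run_teammate`, and `team_lead` are hypothetical names, and `run_teammate` is a stub standing in for a real agent session. The point it illustrates is that each teammate receives only the notes for its own domain, and the teammates run in parallel.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    domain: str                      # e.g. "frontend", "backend", "testing"
    instruction: str
    relevant_files: tuple


def build_context(task: Task, project_notes: dict) -> str:
    """Give a teammate only the notes for its own domain, not the whole project."""
    notes = project_notes.get(task.domain, "")
    files = ", ".join(task.relevant_files)
    return f"[{task.domain}] {task.instruction}\nfiles: {files}\nnotes: {notes}"


def run_teammate(context: str) -> str:
    """Hypothetical stand-in for a model call (assumption: a real teammate is an agent session)."""
    return f"done ({len(context)} chars of context)"


def team_lead(tasks: list, project_notes: dict) -> dict:
    """Build one scoped context per task, then run all teammates in parallel."""
    contexts = {t.domain: build_context(t, project_notes) for t in tasks}
    with ThreadPoolExecutor(max_workers=len(tasks)) as pool:
        results = pool.map(run_teammate, contexts.values())  # map preserves input order
    return dict(zip(contexts.keys(), results))
```

In this sketch, a project manager's strategy notes filed under a key like `"strategy"` never reach the teammate fixing the CSS bug, because `build_context` only looks up the task's own domain.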

One of the most compelling applications is what Addy Osmani describes as "competing hypotheses for debugging."[5] Instead of a single agent investigating theories sequentially, which often leads to anchoring bias, a team lead spawns multiple teammates, each investigating a different theory simultaneously. These agents share findings to disprove each other's theories in real time, converging on root causes faster through what developers describe as a "scientific debate."

Think about what that means in practice: instead of one engineer chasing a rabbit hole for two hours, you have parallel investigation happening in minutes. The agents aren't just faster; they're structurally less prone to confirmation bias because each one starts from a different hypothesis. That's a genuine architectural advantage, not just a speed improvement.
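
The competing-hypotheses pattern can be sketched in a few lines of Python. This is a deliberate simplification under stated assumptions: each "investigator" is a plain function rather than an agent, the shared `observations` dictionary stands in for agents exchanging findings, and the metric names and thresholds are invented for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def investigate_in_parallel(hypotheses: dict, observations: dict) -> list:
    """Check every hypothesis at once; keep only those the evidence does not refute."""
    with ThreadPoolExecutor(max_workers=len(hypotheses)) as pool:
        futures = {
            name: pool.submit(check, observations)
            for name, check in hypotheses.items()
        }
    # Leaving the `with` block waits for all investigators to finish.
    return [name for name, future in futures.items() if future.result()]


# Hypothetical incident data and theories (all values are illustrative).
observations = {
    "error_rate_after_deploy": 0.31,
    "db_latency_ms": 12,
    "cache_hit_ratio": 0.97,
}
hypotheses = {
    "bad deploy": lambda o: o["error_rate_after_deploy"] > 0.05,
    "slow database": lambda o: o["db_latency_ms"] > 200,
    "cache stampede": lambda o: o["cache_hit_ratio"] < 0.5,
}

surviving = investigate_in_parallel(hypotheses, observations)
print(surviving)  # ['bad deploy']
```

No investigator sees another's conclusion before forming its own, which is the property that reduces anchoring in this toy version.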

OpenAI Codex: Cloud-First Execution

OpenAI has taken a different architectural approach with GPT-5.3-Codex, launched in February 2026. This model combines the Codex and GPT-5 training stacks to provide what OpenAI describes as "a step-change from code generation to a general-purpose coding agent you can actively steer while it works."[6]

Where Claude Code operates primarily on the user's local machine for maximum privacy, Codex leans into a cloud-first philosophy. It spins up sandboxed cloud environments where agents can run builds and execute tests without affecting the developer's local setup. This provides a safer environment for parallelizing multiple workstreams and reduces the risk of an agent's mistake contaminating your working environment.[6]

The practical difference matters for businesses evaluating these tools. Local-first means more control over data and lower latency. Cloud-first means easier parallelization and a safer sandbox for experimental work. Neither approach is universally better; the right choice depends on your security requirements, team size, and the nature of the work. But both platforms share the same underlying premise: the developer's role has shifted from writing code to steering agents that write code.

As more organizations adopt these platforms, the challenge quickly shifts from "how do I use one agent effectively" to "how do I coordinate many agents without losing control." That coordination problem is exactly what the next generation of orchestration tools is designed to solve.

Gas Town and the Orchestration Layer

As the number of parallel agents increases, manual management becomes a bottleneck. This has led to the rise of specialized orchestrators like Gas Town, developed by veteran engineer Steve Yegge, whose 40+ year career includes Amazon, Google, and Sourcegraph. Yegge describes the system as "Kubernetes for AI coding agents" because it coordinates unreliable workers toward a persistent goal using a control plane.[2]

The Kubernetes analogy is architecturally accurate, not just marketing. Both systems coordinate unreliable workers toward a goal. Both have a control plane watching over execution nodes. Both use a source of truth that the whole system reconciles against. The key difference is what they optimize for: Kubernetes asks "Is it running?" and optimizes for uptime. Gas Town asks "Is it done?" and optimizes for completion.
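
The "Is it done?" framing maps naturally onto a reconcile loop. The Python sketch below is not Gas Town's implementation; `reconcile` and `make_flaky_worker` are hypothetical names, and the worker is a random stub that fails about half the time. It shows a control loop that keeps comparing desired work against completed work and re-dispatches whatever is still missing.

```python
import random


def reconcile(desired: set, done: set, run_worker, max_rounds: int = 50) -> set:
    """Keep dispatching unfinished tasks until observed state matches desired state."""
    for _ in range(max_rounds):
        pending = desired - done
        if not pending:               # the question is "Is it done?", not "Is it running?"
            break
        for task in sorted(pending):
            if run_worker(task):      # workers are unreliable and may fail
                done.add(task)
    return done


def make_flaky_worker(seed: int, success_rate: float = 0.5):
    """Build a hypothetical worker that succeeds with the given probability."""
    rng = random.Random(seed)

    def worker(task: str) -> bool:
        return rng.random() < success_rate

    return worker


finished = reconcile({"parser", "api", "tests"}, set(), make_flaky_worker(seed=0))
```

Individual failures are expected and simply retried on the next round; what the loop cares about is that the set of finished tasks eventually equals the desired set.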

Gas Town formalizes the orchestrated workforce by assigning agents to specialized roles. The technical core is "Beads," a Git-backed issue tracking system that serves as the system's data plane. This setup enables what Yegge calls "nondeterministic idempotence": a new session can pick up exactly where a previous one left off because the workflow state is stored in Git.[2] Agents crash, sessions expire, context windows fill up, but the work persists.
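
A rough sketch of why persisted workflow state makes sessions resumable, assuming a plain JSON file as a stand-in for the Git-backed store (this is not the Beads format, and `load_ledger` and `run_session` are hypothetical names). Each step is recorded as soon as it completes, so a fresh session skips what is already done.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


def load_ledger(path: Path) -> dict:
    """Read persisted step status; an absent file means nothing has been done yet."""
    return json.loads(path.read_text()) if path.exists() else {}


def run_session(path: Path, steps: list, crash_after: Optional[int] = None) -> list:
    """Execute steps not yet recorded as done, persisting after each one."""
    ledger = load_ledger(path)
    executed = []
    for step in steps:
        if ledger.get(step) == "done":
            continue                          # finished by an earlier session
        if crash_after is not None and len(executed) >= crash_after:
            return executed                   # simulate a crashed or expired session
        executed.append(step)                 # placeholder for the real work
        ledger[step] = "done"
        path.write_text(json.dumps(ledger))   # state outlives the session
    return executed


steps = ["write migration", "update API", "add tests"]
with tempfile.TemporaryDirectory() as tmp:
    ledger_path = Path(tmp) / "ledger.json"   # assumption: JSON file instead of Git
    first = run_session(ledger_path, steps, crash_after=1)
    second = run_session(ledger_path, steps)

print(first)   # ['write migration']
print(second)  # ['update API', 'add tests']
```

The second session knows nothing about the first except what the ledger says, which is the property that lets work survive crashes and full context windows.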

Yegge notes that the focus in this environment shifts to "creation and correction at the speed of thought," prioritizing throughput over perfection.[7] That phrase should resonate with any executive who has watched a development sprint get bogged down in process overhead. The bottleneck is no longer code production; it's the rate of ideas and the quality of specifications you can feed into the system.

But all of these agent teams and orchestrators need to talk to each other and to external tools. That's where standardized protocols become essential.

MCP: The Standard That Makes It Scale

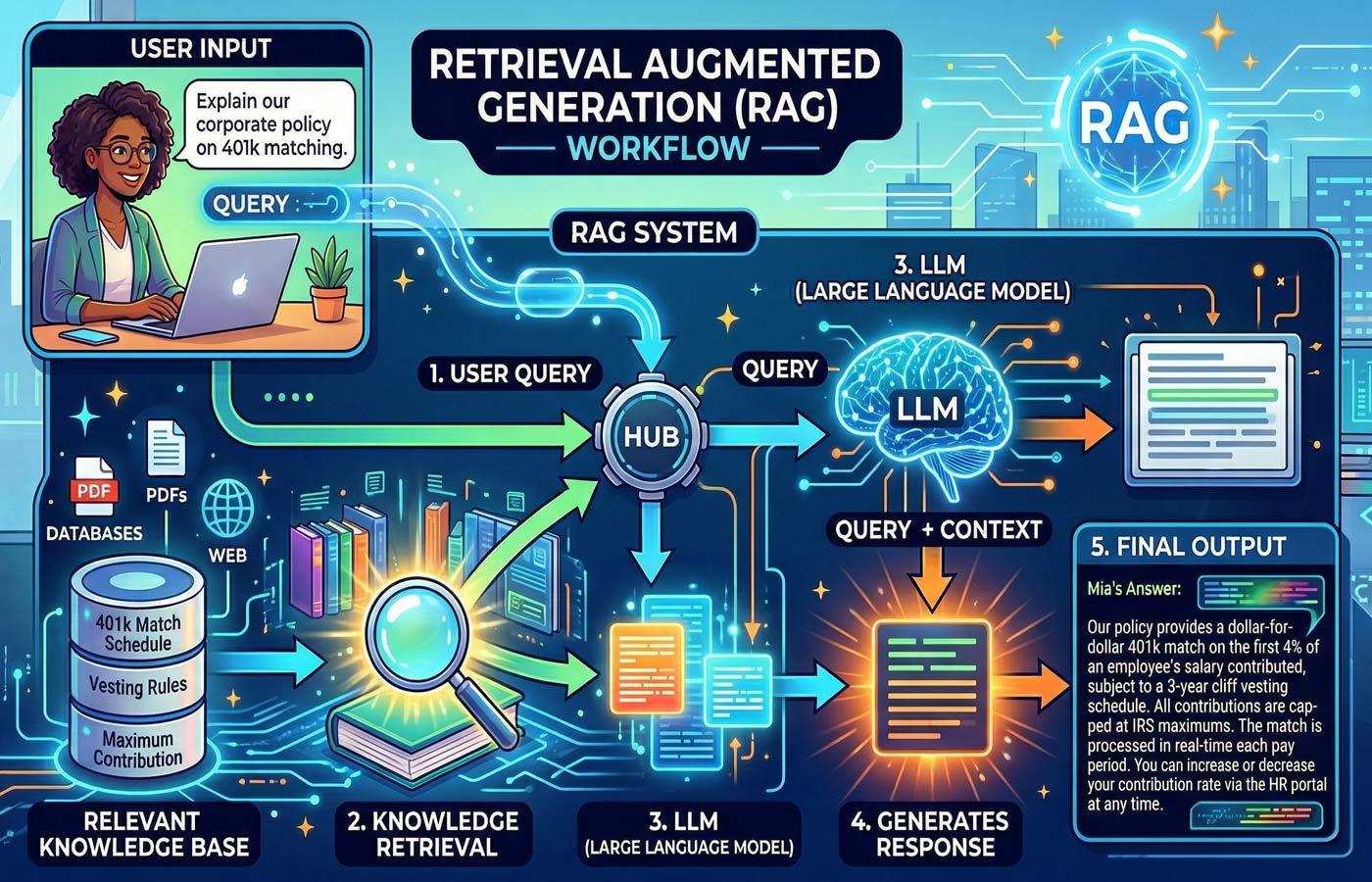

For multi-agent systems to function effectively, a standardized communication layer is required. The Model Context Protocol (MCP), created by Anthropic, has become the industry standard for connecting AI agents to external tools and data. MCP is widely recognized as the "USB-C of AI integrations": a universal standard that eliminates the need for custom glue code between every tool and every agent.[8]

Before MCP, each application or data source required custom integration per LLM. That meant engineering overhead multiplied with every new tool or agent you added to your stack. MCP standardizes those connections, which means adding a new capability to your agent ecosystem becomes a configuration task rather than a development project.
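
For a sense of what "standardized connections" looks like on the wire, MCP messages are JSON-RPC 2.0, with methods such as `tools/list` and `tools/call`. The Python sketch below only builds the request payloads; it omits the transport and the initialization handshake, and the tool name `search_issues` and its arguments are hypothetical.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope of the kind MCP clients send."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Ask a server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name; "search_issues" is an illustrative, made-up tool.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search_issues", "arguments": {"query": "login bug"}},
)

print(json.dumps(call_tool, indent=2))
```

Because every server answers the same two kinds of request, an agent that can send these messages can, in principle, use any tool that speaks the protocol without tool-specific glue code.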

For businesses running multi-agent architectures, this matters enormously. Without a standard protocol, scaling from one agent to five to twenty multiplies integration work: every new agent needs its own connection to every tool, so the number of integrations grows with the product of agents and tools. With MCP, each agent and each tool implements the protocol once, so the number grows roughly with their sum. That's the difference between a pilot project and a production deployment. It's also why MCP adoption has accelerated across the industry. When the alternative is building and maintaining dozens of custom integrations, a universal protocol isn't just convenient; it's an economic necessity.
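
A back-of-the-envelope count makes the scaling difference tangible. The Python sketch below assumes the worst case, where every agent needs every tool, and a fixed stack of ten tools; both assumptions are illustrative rather than taken from any real deployment.

```python
def custom_integrations(agents: int, tools: int) -> int:
    """One bespoke connector per (agent, tool) pair."""
    return agents * tools


def protocol_integrations(agents: int, tools: int) -> int:
    """Each agent and each tool implements the shared protocol once."""
    return agents + tools


TOOLS = 10  # assumed size of the tool stack
for agents in (1, 5, 20):
    print(
        agents,
        custom_integrations(agents, TOOLS),
        protocol_integrations(agents, TOOLS),
    )
# 1 10 11
# 5 50 15
# 20 200 30
```

At a single agent the protocol buys almost nothing; at twenty agents it is the difference between 200 connectors to maintain and 30.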

Standardized communication solves the integration problem. But it also introduces a new concern: when agents can talk to each other, access tools, and take autonomous action, the security surface area expands dramatically.

Security: When Hallucinations Become Actions

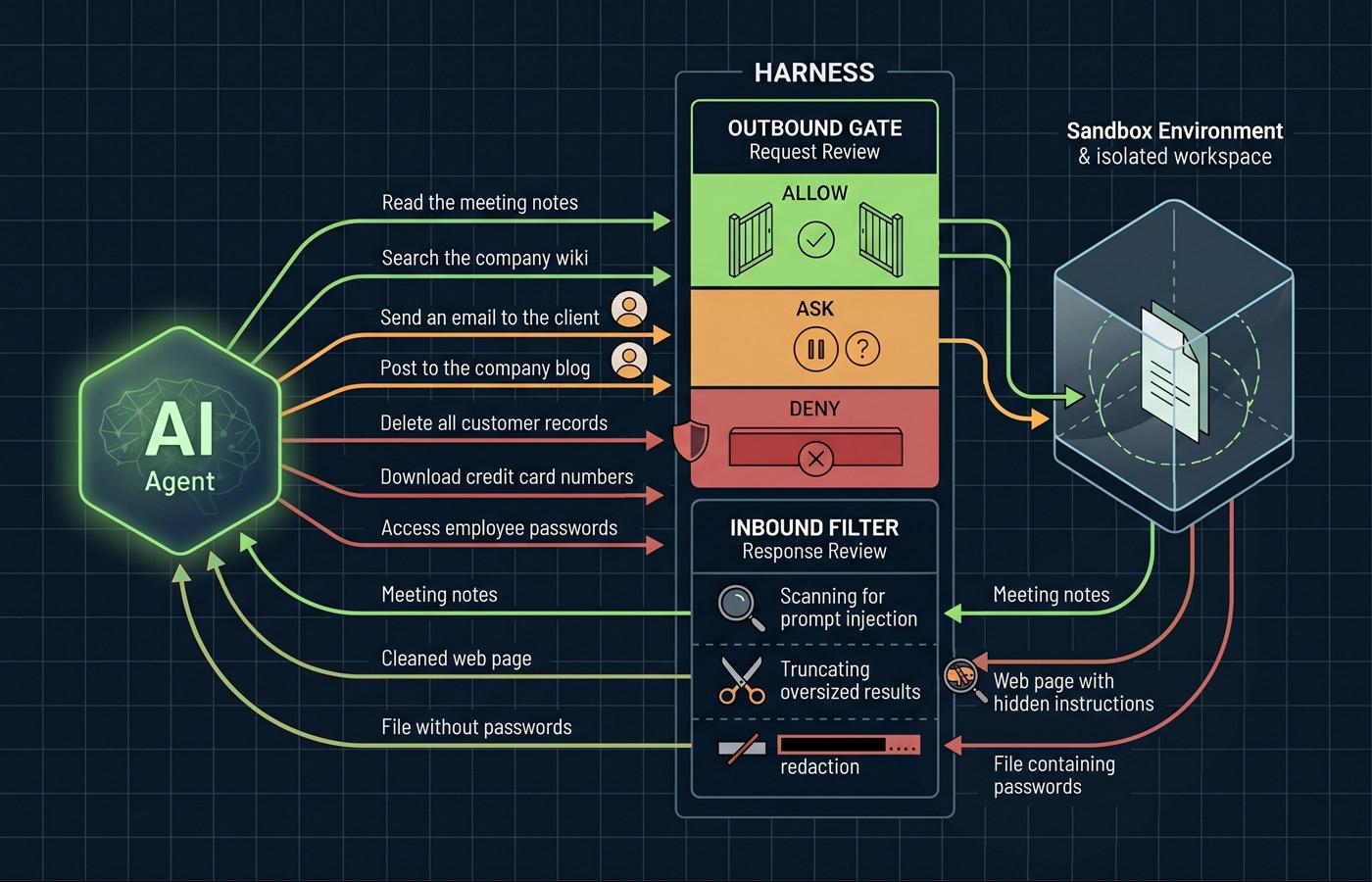

The transition to agentic systems has introduced what researchers call "hallucination in action," where reasoning failures lead directly to failures in the digital environment.[1] When a chatbot hallucinates, you get a wrong answer. When an autonomous agent hallucinates, it can modify files, call APIs, or trigger deployments based on faulty reasoning.

In multi-agent architectures, the problem compounds. Researchers describe cascading hallucination attacks that exploit an agent's tendency to generate false information which, when unchecked, can "spread through memory, influence planning, and trigger tool calls that escalate into operational failures."[9] One agent's bad output becomes another agent's input, and the error propagates through the system before any human has a chance to intervene.

This is the part of the agentic engineering conversation that doesn't make it into the marketing materials. The same autonomy that makes these systems powerful also makes them dangerous if deployed without proper guardrails. Organizations need to think about agent security the way they think about cloud security: it's not a feature you bolt on later but an architectural requirement from day one.

The practical implication is straightforward. Before your team deploys multi-agent workflows, you need clear answers to questions like: What can each agent access? What actions require human approval? How do you detect and contain a cascading failure? If those answers aren't built into the architecture from the start, you're building on a foundation that won't hold.
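
Those three questions can be expressed as a small policy check. The Python sketch below is one possible shape, not a reference to any product's guardrail API: `AgentPolicy`, `Guard`, the agent names, and the tool names are all hypothetical. It covers per-agent tool allowlists, a human-approval gate, and a simple circuit breaker that halts everything after repeated failures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    allowed_tools: frozenset                  # what can this agent access?
    needs_approval: frozenset = frozenset()   # which actions need a human?


class Guard:
    def __init__(self, policies: dict, max_failures: int = 3):
        self.policies = policies
        self.max_failures = max_failures
        self.failures = 0                     # consecutive failed actions

    def check(self, agent: str, tool: str, human_approved: bool = False) -> str:
        """Return 'halted', 'denied', 'needs_approval', or 'allowed'."""
        if self.failures >= self.max_failures:
            return "halted"                   # contain a possible cascade
        policy = self.policies.get(agent)
        if policy is None or tool not in policy.allowed_tools:
            return "denied"
        if tool in policy.needs_approval and not human_approved:
            return "needs_approval"
        return "allowed"

    def record_result(self, ok: bool) -> None:
        """Reset the failure streak on success, extend it on failure."""
        self.failures = 0 if ok else self.failures + 1


guard = Guard({
    "tester": AgentPolicy(frozenset({"run_tests"})),
    "releaser": AgentPolicy(frozenset({"run_tests", "deploy"}), frozenset({"deploy"})),
})
print(guard.check("tester", "deploy"))            # denied
print(guard.check("releaser", "deploy"))          # needs_approval
print(guard.check("releaser", "deploy", True))    # allowed
```

The design choice worth noting is that the check sits outside the agents: an agent that hallucinates a reason to deploy still cannot do so unless the policy and a human both agree.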

Final Thoughts

We've moved from a world where AI helped you write code to a world where AI teams execute your architectural vision. The developer's job hasn't disappeared; it's been elevated from syntax to strategy, from typing to orchestrating. Claude Code agent teams, OpenAI Codex, Gas Town, and MCP aren't research prototypes; they're production systems being used by real engineering teams today.

And here's what business leaders need to internalize: this shift requires new skills, new security frameworks, and new ways of thinking about software development. The organizations that will benefit most aren't the ones that deploy the most agents. They're the ones that deploy them with the right guardrails, the right oversight, and a clear understanding of where human judgment remains irreplaceable.

References

[1] Agentic Artificial Intelligence (AI): Architectures, Taxonomies, and Evaluation of Large Language Model Agents. arXiv.

[2] Gas Town: What Kubernetes for AI Coding Agents Actually Looks Like. Cloud Native Now.

[3] Vibe Coding Is Passé. Karpathy Has a New Name for the Future of Software. The New Stack.

[4] 2026 Agentic Coding Trends Report. Anthropic.

[5] Claude Code Swarms. AddyOsmani.com.

[6] Model Release Notes. OpenAI Help Center.

[7] Welcome to Gas Town. Steve Yegge, Medium.

[8] Top 5 Open Protocols for Building Multi-Agent AI Systems 2026. OneReach.

[9] Agentic AI Security: A Guide to Threats, Risks & Best Practices. Rippling.