Why We Misunderstand AI Headlines

tl;dr

- "AI" doesn't mean "chatbot": When headlines report 40% efficiency gains from "AI," most people think ChatGPT, leading to misguided expectations and investments

- Your chatbot is not just a chatbot: Modern chatbot interfaces route to multiple specialized AI tools behind the scenes, meaning you may not be using what you think you're using

- It isn't all one technology: The dramatic cost reductions come from specialized AI systems including predictive maintenance, supply chain optimization and fraud detection

Your inbox fills with headlines. AI cuts operational costs by 40%. Competitors automate entire departments. A vendor demo analyzes contracts in seconds.

You implement a chatbot solution. Train the team. Wait for the transformation.

Six months later, the results don't match the headlines. Email composition improved. Meeting summaries work well. But the promised efficiency gains never materialized.

The problem isn't the technology. The problem is that the AI you implemented is not the AI those headlines describe.

Misconception #1: AI Means Chatbots

When most readers see "artificial intelligence" in an article, they immediately think of chatbots: ChatGPT, Claude, Gemini. That's the AI they interact with daily, the one making headlines, the one their competitors discuss.

And that's the problem. In today's world, 95% of people read an article about AI and immediately think only of chatbots.

Honestly, the confusion is understandable. ChatGPT's launch in late 2022 created an "iPhone moment" for artificial intelligence. For most of us, it was our first meaningful interaction with AI, and it became the reference point for understanding the entire field. Media coverage reinforced this singular focus: early AI headlines were almost exclusively about large language models. GPT, Gemini, Claude: these names have dominated the conversation, and for good reason. The advances in LLMs have been genuinely remarkable, from generating production-quality code to passing professional licensing exams.

But here's what gets lost in the coverage: those LLM breakthroughs are driving innovation across the entire AI landscape. The same research pushing language models forward is fueling new architectures for computer vision, robotics, and scientific discovery. New model types, new software frameworks, and new purpose-built hardware are emerging at an accelerating pace. So when a headline announces "Company Implements AI, Sees 35% Efficiency Gain," readers still assume it's about ChatGPT. The reality is far more diverse, with LLMs typically playing a much smaller role in the overall solution.

This creates two problems. Both costly.

First, business leaders pursue chatbot implementations expecting transformational operational improvements. Large language models excel at language tasks: writing emails faster, generating content, improving customer service, accelerating coding. These are real productivity gains. But they're incremental improvements to knowledge work, not the operational efficiency transformations promised in headlines.

Second, the misconception obscures what's actually happening in AI investment. If you believe the billions are flowing into chatbot technology, you might question whether that spending is sustainable. Can language models justify that investment?

They don't have to. Most of that investment isn't going to language models at all.

Misconception #2: Your Chatbot Is Just a Chatbot

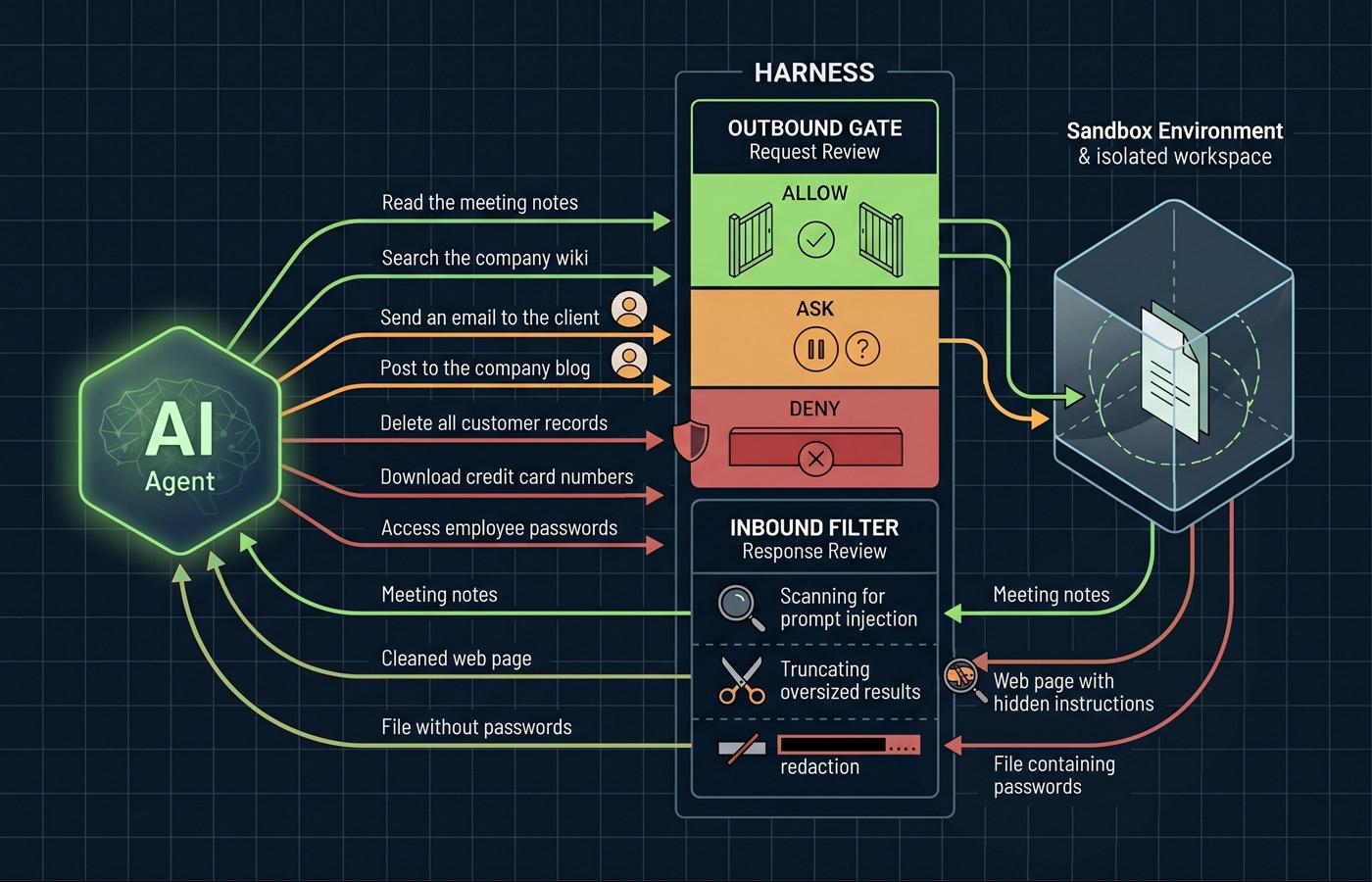

The waters get muddier when you consider that what looks like a chatbot might be using more than just a large language model.

When you ask Gemini to create an image, a large language model is not what draws it. An LLM interprets your request, and then Google's software routes it to its Nano Banana image generation model. When you ask it to solve complex math, it either writes a small program in Python or JavaScript behind the scenes or calls specialized computational tools it has access to.

In this regard, you can think of the chatbot interface like a skilled client manager at a large company. You interact with them primarily. You can tell them your need, and behind the scenes they route your request to the right specialist: accounting, legal, engineering, design. The response comes back to them and they present it to you. You're talking to the client manager the whole time, but different experts are doing the actual work.

This means that when you interact with a modern chatbot, you are probably using specialized AI systems without realizing it. The interface is conversational, but the technology doing the work could be image generation, text-to-speech or speech-to-text, code execution, mathematical computation, or even simple web search, none of which is itself a large language model.
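The client-manager pattern can be sketched in a few lines of Python. This is a toy illustration, not how any real product is built: every function name is hypothetical, and a simple keyword match stands in for the language model's interpretation of the request.

```python
from typing import Callable, Dict

# Hypothetical specialists. In a real product each would be a separate
# model or service; here they only label which one handled the request.
def generate_image(request: str) -> str:
    return f"[image model] rendering: {request}"

def run_calculation(request: str) -> str:
    return f"[code execution] computing: {request}"

def search_web(request: str) -> str:
    return f"[web search] looking up: {request}"

def answer_with_llm(request: str) -> str:
    return f"[language model] replying to: {request}"

TOOLS: Dict[str, Callable[[str], str]] = {
    "image": generate_image,
    "math": run_calculation,
    "search": search_web,
    "chat": answer_with_llm,
}

# Assumption: keyword triggers stand in for the LLM deciding what you want.
TRIGGERS = {
    "image": ("draw", "image", "picture"),
    "math": ("calculate", "solve", "compute"),
    "search": ("latest", "news", "today"),
}

def classify(request: str) -> str:
    """Pick a specialist for the request, defaulting to plain chat."""
    words = request.lower().split()
    for tool, keywords in TRIGGERS.items():
        if any(keyword in words for keyword in keywords):
            return tool
    return "chat"

def handle(request: str) -> str:
    """The 'client manager': one entry point, different specialists behind it."""
    return TOOLS[classify(request)](request)

print(handle("Draw a cat in a hat"))
print(handle("Write an email to my team"))
```

The user only ever calls `handle`, yet two of the four paths never reach the language model's own answer.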

Misconception #3: Chatbots Are Creating Incredible Efficiencies in Businesses

But here's the key: the client manager can only route you to specialists in their office. If you need the highly specialized work driving those headline-making efficiency gains, those specialists aren't in the building at all.

The large cost reductions making headlines come from specialized AI systems that most people don't consider when thinking about artificial intelligence. These systems don't chat. They don't write emails. They can't explain their reasoning in natural language. Instead, they analyze sensor data, optimize complex systems, and detect patterns that human operators could not spot.

When you read that a manufacturer cut unplanned downtime by 35%, that's not a chatbot analyzing maintenance schedules. That's a specialized AI system that monitors vibration patterns in industrial equipment and detects subtle changes that might indicate a bearing will fail in 48 hours. The system doesn't "understand" language; it understands equipment and physics.
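To make the contrast concrete, here is a minimal sketch of the simplest version of this idea: learn what "normal" vibration looks like, then flag readings that drift away from it. It assumes a basic statistical threshold rather than the learned models a production system would use, and the function name, parameters, and readings are all made up for illustration.

```python
from statistics import mean, stdev

def flag_anomalies(readings, baseline_size=20, threshold=3.0):
    """Return indices of readings far outside the healthy baseline.

    The first `baseline_size` readings are assumed to come from healthy
    equipment. Later readings are flagged when they sit more than
    `threshold` standard deviations from the baseline mean.
    """
    baseline = readings[:baseline_size]
    mu = mean(baseline)
    sigma = stdev(baseline)
    flagged = []
    for i, value in enumerate(readings[baseline_size:], start=baseline_size):
        if sigma > 0 and abs(value - mu) / sigma > threshold:
            flagged.append(i)
    return flagged

# Made-up vibration amplitudes: 20 steady readings, then a growing drift.
healthy = [1.00, 1.02, 0.98, 1.01, 0.99] * 4
drifting = [1.01, 1.03, 1.10, 1.25, 1.40]

alerts = flag_anomalies(healthy + drifting)
print(alerts)  # [22, 23, 24]
```

Nothing in this code reads or writes language. It only compares numbers against a learned baseline, which is the kind of work the headline numbers come from.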

When a headline reports a commercial building operator reduced energy costs by 30%, that's not an LLM optimizing thermostats. That's deep reinforcement learning controlling building systems in real time, reacting to thermal sensor readings in milliseconds. This is control system engineering, not natural language processing.

And when a bank announces it prevented billions in fraud losses, that's not ChatGPT reading transaction logs. That's yet another AI application that analyzes the relationships between thousands of transactions simultaneously, flagging suspicious patterns that would take human analysts hours to detect.
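A toy example shows why relationships matter more than individual rows. This sketch assumes one hand-written rule (many accounts sharing a single device) as a stand-in for the learned models a bank would actually run. The field names, threshold, and data are hypothetical.

```python
from collections import defaultdict

def shared_device_accounts(transactions, min_accounts=3):
    """Find devices used by at least `min_accounts` distinct accounts."""
    accounts_by_device = defaultdict(set)
    for tx in transactions:
        accounts_by_device[tx["device"]].add(tx["account"])
    return {
        device: sorted(accounts)
        for device, accounts in accounts_by_device.items()
        if len(accounts) >= min_accounts
    }

# Made-up transactions. No single row looks unusual on its own.
transactions = [
    {"account": "A1", "device": "d-17", "amount": 40},
    {"account": "A2", "device": "d-17", "amount": 55},
    {"account": "A3", "device": "d-17", "amount": 38},
    {"account": "A4", "device": "d-02", "amount": 900},
    {"account": "A1", "device": "d-17", "amount": 42},
]

suspicious = shared_device_accounts(transactions)
print(suspicious)  # {'d-17': ['A1', 'A2', 'A3']}
```

The largest transaction is not the one flagged. The signal only appears when the rows are examined together, which is the part that takes human analysts hours.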

These dramatic operational improvements share a common thread: they come from specialized AI systems designed for specific tasks, such as analyzing sensor data, optimizing complex systems, and detecting patterns in numerical relationships. These systems share underlying neural network technology [1] with large language models, and just like LLMs, they're advancing at a remarkable pace. New architectures, purpose-built hardware, and expanding research investment are accelerating these specialized systems right alongside the language models grabbing headlines. But they're fundamentally different tools designed for fundamentally different purposes.

Final Thoughts

Information is power. If you don't understand something you're using, you risk being duped, leaving efficiency on the table, or implementing solutions that won't meet expectations. This is part of the reason why we're also reading headlines saying 15% of organizations are seeing zero value from AI investment.

The media uses "AI" as shorthand for a diverse ecosystem of technologies. For business leaders trying to make informed decisions, collapsing that ecosystem into chatbots creates blind spots, misallocated budgets, and missed opportunities. A company investing in chatbots because they read about transformational efficiency gains is probably misunderstanding where those gains come from. Conversely, dismissing AI as overhyped because a chatbot didn't deliver means evaluating the wrong technology against the wrong metrics.

When you read that AI is transforming industries or see billions in investment, take a minute to figure out which type of AI is being discussed. That distinction is the difference between understanding your industry and being misled by headlines. The chatbot revolution is real. Large language models are incredibly powerful productivity tools that will continue improving and benefiting people and businesses for years to come. But they're one piece of a much larger picture.

Understanding the difference isn't just technical knowledge. It's the foundation for making informed decisions about where to invest, what to expect, and how to evaluate whether AI implementations actually deliver value.

[1] Neural networks are computational systems inspired by the structure of the human brain. They consist of interconnected layers of simple processing units (neurons) that learn to recognize patterns by analyzing large amounts of data. Unlike traditional software that follows explicit programmed rules, neural networks develop their own internal representations of patterns through training. This makes them effective at tasks like recognizing images, understanding speech, predicting equipment failures, or detecting fraud: tasks where the rules are too complex to program explicitly but clear patterns exist in the data.
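The difference between programmed rules and learned patterns can be shown with the smallest possible case: a single artificial neuron (a perceptron) trained on four examples. This is a teaching sketch only; real networks stack many such units and train on far more data, and the function names here are illustrative.

```python
def predict(weights, bias, inputs):
    """One neuron: weighted sum of the inputs, then a yes/no threshold."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def train(examples, epochs=10, learning_rate=1):
    """Adjust weights from labeled examples using the perceptron rule."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        for inputs, label in examples:
            error = label - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# The pattern to learn: output 1 when either input is on.
# The rule is never written in code; it is only shown through examples.
examples = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights, bias = train(examples)
predictions = [predict(weights, bias, inputs) for inputs, _ in examples]
print(predictions)  # [0, 1, 1, 1]
```

The training loop never contains an "if either input is on" statement. The behavior emerges from the weights, which is why the same approach can be pointed at images, sensor readings, or transactions.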